Author: Jonathan Michaux, Senior Product Manager, Gravitee

Bio: Jonathan is a Senior Product Manager at Gravitee, the company that builds the event-native full lifecycle API management platform. He has worked on multiple enterprise software products at companies like Talend, Restlet, and TriggerMesh. His mission has consistently revolved around delivering transformative tools for developers. Jonathan holds a Ph.D. in computer science from l’Institut Mines-Télécom in Paris, specializing in formal methods for concurrent systems, and is the co-author of the O’Reilly Kubernetes Cookbook 2nd Edition (2023).

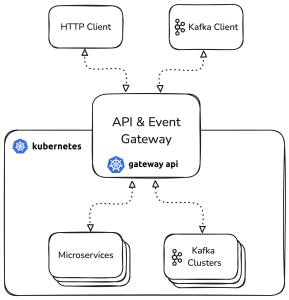

The Kubernetes Gateway API does not offer a protocol-aware solution for managing Kafka traffic. This article discusses a proposal for a Kafka extension to the Gateway API to enable secure, cloud-native access to Kafka.

What is the Kubernetes Gateway API?

The Kubernetes Gateway API is the preferred method for specifying how traffic flows from clients outside a Kubernetes cluster to services running inside the cluster (north/south traffic), as well as how services can communicate inside a cluster (east/west traffic). A growing list of vendors support this CNCF-governed standard, and end-users are drawn to the promise of increased portability and reduced vendor lock-in.

The Gateway API currently covers HTTP and gRPC traffic, with experimental work under way for managing TLS, TCP and UDP traffic. One of the API’s core objectives is to improve the separation of concerns between infrastructure providers, platform operators, and application developers. On the one hand, the GatewayClass and Gateway resources allow cluster admins to define common configurations, such as which gateway technology to use and in which version. On the other hand, resources like HTTPRoute enable developers to manage the way traffic is routed to their services. This fosters a shift-left mentality, but with central guardrails.

Let’s take a look at the HTTPRoute resource to see what this spec can actually do. It can, for instance, be used to implement request header-based routing, like sending requests with a specific User-Agent to a specific backend.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

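apiVersion: gateway.networking.k8s.io/v1beta1
kind: HTTPRoute
metadata:
  name: header-routing
spec:
  parentRefs:
  - name: my-gateway
  rules:
  # Rule 1: requests whose User-Agent starts with "MobileApp"
  - matches:
    - headers:
      - name: User-Agent
        type: RegularExpression
        value: "^MobileApp.*"
    backendRefs:
    - name: mobile-backend
      port: 80  # a port is required for Service backends; 80 is assumed here
  # Rule 2: no matches block, so this catches all remaining requests
  - backendRefs:
    - name: default-backend
      port: 80  # assumed, as above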

[/dm_code_snippet]

This HTTPRoute routes requests from a mobile app (those whose User-Agent header starts with MobileApp) to mobile-backend, while all other requests go to default-backend.

Notice how this logic has nothing vendor-specific about it. But of course, the Kubernetes Gateway API doesn’t actually implement the logic behind any of these rules, transformations, and other goodies that it provides. You need to pick a specific implementation to use (a controller, in Kubernetes parlance), which could be something like NGINX, Traefik, or the ones provided out of the box by the major Cloud Service Providers that support the Gateway API.

The Kubernetes Gateway API is extensible by design and open to ideas about additional protocols that could be supported. TLS, TCP, and UDP are examples of Route types that are classified as “experimental” at the time of writing but are already supported by multiple vendors.

Now we get to the interesting part, which is the idea that the Gateway API could be extended to natively support Kafka traffic. But why would anyone want to do such a thing? Please read on to find out!

What does Kafka have to do with the Gateway API?

I won’t expand on the obvious statement that Kafka is one of the most widely used technologies to implement event-driven architectures. The other increasingly obvious fact about Kafka is that it is not very good at (nor was it designed for) providing organisations with solutions to govern the way Kafka is used at enterprise-scale. Kafka is nearly 15 years old and for the longest time has been relegated to internal plumbing, viewed as a purely architectural component, and used by a specialised handful of employees that are fluent in these technologies and their data-centric use cases.

Now let’s fast forward to today’s realtime, data-centric world in which everyone wants to leverage the valuable data living in Kafka. Internal usage of Kafka is spreading, but there is also increased appetite to open event streams to partners and external customers.

Many of us are now experiencing the challenges that come with attempting to open up Kafka to new clients and applications. There is increased desire and pressure to do so, because the data residing in those Kafka topics is a gold mine that the business could be putting to good use. But opening access to Kafka in this way either creates significant governance and security challenges for the companies attempting it, or is still seen as a nascent pattern. Both stem from the lack of tooling to ensure this can be done in a governed, secure way.

Why exactly is it challenging to expose Kafka to clients, like you would with traditional API management solutions? The management layer (or control plane) typically provided with Kafka solutions is designed with neither broad internal access nor external consumption in mind. The granularity and flexibility of Kafka’s Access Control Lists (ACLs) make it daunting to dynamically manage access to data at the rate that an innovative business demands. Once access is provided, chaos often ensues as users create outrageous numbers of topics and clusters, with poor understanding of ownership or of what type of data resides within.

The future demands a unified approach that offers equal management capabilities for both APIs and events, ensuring seamless discovery, governance, and monetization of event-driven data flows, just like their REST API counterparts. More and more technology leaders in IT are taking on responsibility for both APIs and event streams, and dream of being able to modernize and standardise the way they operate across both of these foundational capabilities.

But until recently, the idea that one technology could help manage both APIs and Event Streams was mostly unheard of.

Emergence of the Kafka Gateway pattern

The Kafka Gateway pattern describes an approach by which a software gateway intercepts Kafka traffic between a Kafka Broker and a Kafka Client. The Kafka Gateway must implement the Kafka protocol directly, meaning that clients of the gateway are Kafka consumers and producers that communicate using the native Kafka protocol. Clients interact with and see the gateway as if it were a regular Kafka broker. It is conceptually analogous to an API gateway, except that it acts at the level of the Kafka protocol rather than the HTTP protocol. You can also think of this pattern as a Kafka proxy.

Kafka Gateways make it easy to provide new Kafka clients with direct access to Kafka clusters, in a much faster and simpler way than before, while enforcing central security and governance guardrails. From an industry standpoint, this pattern is gaining traction, with increased demand and vendors starting to support it. Solutions in this space are typically referred to as Event Gateways, Kafka Gateways, or Kafka Proxies. Some vendors support both HTTP and Kafka traffic in one single gateway solution.

If we take a step back and think about the goals that the Kubernetes Gateway API is trying to achieve, and add to that the increased need to manage Kafka in the same way as HTTP APIs, the idea of extending the Gateway API to natively support Kafka traffic starts to make a lot of sense. To make the idea more tangible, let’s dive into what the Gateway API KafkaRoute extension actually looks like, and how it brings the Kafka Gateway pattern into the realm of cloud-native networking.

Introducing KafkaRoute: A Gateway API Extension for Kafka

The idea of the KafkaRoute API is to extend the Kubernetes Gateway API model to provide a standardized, vendor-neutral way to route and control Kafka traffic. Similar to how HTTPRoute manages HTTP traffic, KafkaRoute allows administrators to define how Kafka clients interact with brokers while enforcing policies related to routing, security, access control, as well as some transformations.

KafkaRoute shares many of the same key concepts and functionality as HTTPRoute, but also includes some variants of existing concepts as well as some entirely new ideas that are specific to the Kafka protocol.

GatewayClass, Gateway, and Parent References (parentRefs)

Let’s start at the beginning of the journey of a Kubernetes Kafka administrator, who first needs to decide which gateway to use. The KafkaRoute resource must respect the Gateway API’s design principle that the configuration of a KafkaRoute is a separate concern from the definition and configuration of the underlying gateway.

The first step to setting up a gateway to serve KafkaRoutes is to define what gateway implementation (or class) will actually be used. Here we are referencing a hypothetical gateway called eventstreamgateway.io.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

kind: GatewayClass
apiVersion: gateway.networking.k8s.io/v1
metadata:
  name: api-gtw
spec:
  controllerName: eventstreamgateway.io/gatewayclass-controller

[/dm_code_snippet]

Now we can define the Gateway, which is like an instance of the GatewayClass.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: api-gtw
spec:
  gatewayClassName: api-gtw
  listeners:
  - name: kafka
    port: 9092
    protocol: TLS
    hostname: "*.kafka-apis.acme.com"
    tls:
      mode: Terminate
      certificateRefs:
      - group: ""
        kind: Secret
        name: gateway-kafka-tls

[/dm_code_snippet]

This Gateway defines a Kafka entry point for clients using the Kubernetes Gateway API. It listens on port 9092 with TLS termination, meaning it handles secure client connections before forwarding traffic to backend Kafka brokers.
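As a sketch of how a platform operator might keep this listener reserved for Kafka traffic, the same Gateway can be extended with the Gateway API’s standard allowedRoutes field. This assumes that the proposed KafkaRoute kind lives in the gateway.networking.k8s.io group, as in the examples in this article; the namespace policy shown is an arbitrary choice for illustration.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: api-gtw
spec:
  gatewayClassName: api-gtw
  listeners:
  - name: kafka
    port: 9092
    protocol: TLS
    hostname: "*.kafka-apis.acme.com"
    tls:
      mode: Terminate
      certificateRefs:
      - group: ""
        kind: Secret
        name: gateway-kafka-tls
    allowedRoutes:
      namespaces:
        from: All            # illustrative; the Gateway API default is Same
      kinds:
      - group: gateway.networking.k8s.io   # assumed group for the proposed kind
        kind: KafkaRoute                   # only KafkaRoutes may attach here
```

With a restriction like this, application teams could attach their own KafkaRoutes to the listener, while routes of other kinds would be rejected by it.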

A Simple Kafka Route

Next, we can define the simplest possible KafkaRoute, which we’ll later build upon with other fancy features.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
metadata:
  name: kafka-app-1
spec:
  parentRefs:
  - name: api-gtw
  backendRefs:
  - name: my-kafka-service
    port: 9092
  hostname: my-kafka-route

[/dm_code_snippet]

This KafkaRoute does not include any rules, filters, or policies of any kind. It simply exposes an entire Kafka cluster to clients that are able to reach this host and port.

The parentRefs field connects the KafkaRoute to a parent Gateway, just like with HTTPRoute. This ensures a separation of concerns between platform operators who configure infrastructure (Gateway) and developers who define application-specific routing (KafkaRoute).

Unlike HTTPRoute, which routes requests to individual backend services, KafkaRoute proxies an entire Kafka cluster. Therefore, the backendRefs field specifies the Kafka services and ports (in the same way that you would set the bootstrap server for your Kafka Client), ensuring the gateway acts as an entry point to the entire Kafka cluster, rather than a single service like HTTPRoute does. This is why for KafkaRoute, the backendRefs is moved to the root of the spec.

Kafka clients require a bootstrap server URL to initiate communication. The hostname field allows operators to define a custom subdomain for Kafka clients, so that external clients can target that specific route while maintaining a structured DNS-based approach.

The brokerMapping parameter is not explicitly set here and is left to a default value. Kafka clients need to communicate with individual brokers within a cluster. The brokerMapping field defines how to translate Kafka cluster brokers’ hostnames into domain names that are configured to target the Kubernetes cluster, ensuring clients can seamlessly resolve broker addresses based on metadata provided by the upstream Kafka cluster.

One last noteworthy thing is that multiple KafkaRoutes can refer to a single Gateway, and that KafkaRoutes on a Gateway can point to the same or different upstream Kafka clusters. This provides a lot of flexibility when it comes to determining the access controls and other policies that are applied on the KafkaRoutes. We’ll take a look at examples of KafkaRoute policies in the next couple of sections.
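To make this concrete, here is a minimal sketch of two KafkaRoutes attached to the same api-gtw Gateway, each pointing at a different upstream Kafka cluster. It reuses only the fields shown in the simple KafkaRoute above; the route names, Service names, and hostnames are hypothetical and chosen purely for illustration.

```yaml
apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
metadata:
  name: kafka-orders              # hypothetical route name
spec:
  parentRefs:
  - name: api-gtw                 # same parent Gateway as the other route
  backendRefs:
  - name: orders-kafka-service    # hypothetical Service for a first Kafka cluster
    port: 9092
  hostname: orders                # hypothetical hostname for this route
---
apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
metadata:
  name: kafka-payments            # hypothetical route name
spec:
  parentRefs:
  - name: api-gtw
  backendRefs:
  - name: payments-kafka-service  # hypothetical Service for a second Kafka cluster
    port: 9092
  hostname: payments              # hypothetical hostname for this route
```

Because each route carries its own hostname, each one can later receive its own filters and policies without affecting the other.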

Topic matching and topic mapping

Let’s extend this simple KafkaRoute with a topic mapping policy.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
metadata:
  name: kafka-app-1
spec:
  parentRefs:
  - name: api-gtw
  backendRefs:
  - name: my-kafka-service
    port: 9092
  hostname: my-kafka-route
  filters:
  - name: mapping
    type: TopicMapping
    topicMapping:
      client: orders-external-topic
      broker: orders-internal-topic

[/dm_code_snippet]

As you can see, I’ve added a filter called TopicMapping. This maps external topic names to internal ones, allowing you to abstract away the complexity of internal Kafka topic names, and providing more user-friendly topics to external clients.

ACL policies

Access Control Lists (ACLs) let you define fine-grained permissions for operations on topics, clusters, and groups.

Let’s add an ACL filter policy to our KafkaRoute.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
metadata:
  name: kafka-app-1
spec:
  parentRefs:
  - name: api-gtw
  backendRefs:
  - name: my-kafka-service
    port: 9092
  hostname: my-kafka-route
  filters:
  - name: mapping
    type: TopicMapping
    topicMapping:
      client: orders-external-topic
      broker: orders-internal-topic
  - name: acls
    type: ACLs
    acls:
    - name: topic-match
      type: Topic
      pattern:
        type: PREFIX
        expression: "orders"
      topicOperation: Read

[/dm_code_snippet]

Now we’ve extended the behaviour of this KafkaRoute so that only read operations are allowed on topics whose names start with the string orders. In a similar way, ACLs can be defined for clusters, consumer groups and tokens.

Topic mapping and ACLs are just two of many ways KafkaRoute can be used to secure, shape, and govern Kafka traffic in Kubernetes. Quotas and transformations are other examples of policies that will fit nicely into this spec.

Extension filters

Similarly to HTTPRoute, KafkaRoute supports an extension mechanism so that vendors can implement filters that are specific to their implementations. The example below illustrates this with a KafkaHeaderPolicy extension supported by the hypothetical implementation by eventstreamgateway.io.

[dm_code_snippet background=”yes” background-mobile=”yes” slim=”no” line-numbers=”no” bg-color=”#abb8c3″ theme=”dark” language=”php” wrapped=”no” height=”” copy-text=”Copy Code” copy-confirmed=”Copied”]

apiVersion: gateway.networking.k8s.io/experimental
kind: KafkaRoute
…
  filters:
  - name: transform
    type: ExtensionRef
    extensionRef:
      group: eventstreamgateway.io  # API group only; the version belongs in apiVersion below
      kind: KafkaHeaderPolicy
      name: add-header
---
apiVersion: eventstreamgateway.io/v1alpha1
kind: KafkaHeaderPolicy
metadata:
  name: add-header
spec:
  add:
    X-Custom: Hello

[/dm_code_snippet]

This transformation adds the header X-Custom: Hello to messages passing through the gateway.

KafkaRoute is a big step forward for cloud-native Kafka practitioners

As you can see, the KafkaRoute makes it very easy for Kubernetes and Kafka administrators to manage access to their Kafka clusters in a way that is both cloud-native and Kafka protocol-aware. The details of how security and authentication work, both on the backend side and the client side, are beyond the scope of this brief article. But in essence, the KafkaRoute allows Kafka to be securely exposed to consumers outside of the Kubernetes cluster, leading to new opportunities to leverage the valuable data within.

You might be asking yourself how this idea relates to other cloud-native projects like Strimzi or Knative. The short answer is that they are highly complementary. Strimzi can be used to deploy, operate and configure a Kafka cluster running on Kubernetes, while the Gateway API’s KafkaRoute can focus on access control and governance, including for clients outside of the cluster. Knative, on the other hand, is fairly orthogonal to KafkaRoute: it focuses on integrating event sources and applications and can leverage Kafka as an event source, a sink, or the underlying backbone for the Knative Broker. Knative is not able to directly process and proxy the native Kafka protocol.

As mentioned earlier, this entire approach is based on the idea that gateways that implement the Kubernetes Gateway API can also act as proxies for the Kafka protocol. This is an emerging pattern, sometimes referred to as Event Gateways, Kafka Gateways, or Kafka Proxies. There are also solutions looking to address both HTTP and Kafka traffic within a single solution. All of the vendors in these areas are good candidates to implement this KafkaRoute API.

By extending the Gateway API paradigm to event-driven architectures, KafkaRoute ensures that Kafka can be managed, secured, and exposed in a cloud-native way. Just as HTTPRoute modernized API gateway traffic management, KafkaRoute brings self-service discovery, security policies, and operational consistency to Kafka ecosystems, without locking users into vendor-specific implementations.

If you’re interested in learning more about API and event stream management, and the new KafkaRoute, please join me and the Gravitee team (https://www.gravitee.io) at our KubeCon London booth during the first week of April 2025.