Author: Marco Mangiulli, CIO and Head of Software Development at Aruba SpA | CEO and CTO at ArubaKube

Bio: Marco Mangiulli is CIO & Head of Software Development of Aruba S.p.A, and member of the Board of Directors of Aruba PEC S.p.A. For 7 years in Aruba, he has been Head of Software Development, leading the design and implementation of Web Hosting, Domain Registration, Cloud Computing, eSecurity and Trust Services. Since July 2023, he has also been the CEO and CTO of ArubaKube, a spin-off of the Polytechnic University of Turin and a centre of excellence for Cloud Native development.

Why integration testing?

Integration testing is vital for any cloud-native solution, ensuring the smooth interoperability of the product across various components and environments. It helps identify and address potential issues, such as regressions during feature development or compatibility challenges with underlying technologies like Kubernetes distributions or Container Networking Interfaces (CNIs). These tests are crucial for maintaining a consistent and reliable user experience, verifying that the product performs reliably under diverse conditions.

Our experience

We are the developers and the maintainers of Liqo, an open-source project that enables seamless Kubernetes multi-cluster infrastructures. It allows users to create a single Kubernetes (virtual) cluster spanning multiple environments, such as public clouds, on-premises data centers, and edge environments.

In our case, every commit and release must pass a test matrix that runs on several Kubernetes clusters with different configurations. This is how we make sure that the pods handled by Liqo can run on multiple setups and that the network interconnection between clusters is stable.

The problem

To achieve this goal, we need to test several cases, which vary depending on the project features:

- compatibility with current and previous Kubernetes API versions

- compatibility with different OSs and Linux distributions

- compatibility with different container runtimes

- compatibility with different storage providers

- compatibility with different CNI plugins

- compatibility with related project versions (e.g., Knative, MinIO, Argo, and others)

For these reasons, the tests are resource-hungry: each run requires multiple full Kubernetes clusters, each with multiple nodes.

Usually, simple integration testing runs KinD-provided clusters (described below) on large virtual machines that stay allocated for the entire day, even when no test is running. This approach wastes resources, because the VMs hold a significant amount of energy and computing power whether or not they are doing useful work.

Furthermore, KinD does not create a cluster out of real machines: its nodes are containers that stand in for them. Fortunately, more efficient and cost-effective ways exist to perform integration testing, such as containerization or cloud-based testing solutions that provide better flexibility.
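To make the cost of always-allocated VMs concrete, here is a minimal sketch of the arithmetic behind the comparison. The VM size, the number of runs per day and the run duration are hypothetical values chosen only for illustration; they are not measurements from our environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DailyUsage:
    """Resources reserved over one day, in core-hours and GiB-hours."""
    core_hours: float
    gib_hours: float


def always_on(cores: int, mem_gib: int) -> DailyUsage:
    # A dedicated VM holds its resources for all 24 hours, tests or not.
    return DailyUsage(cores * 24.0, mem_gib * 24.0)


def on_demand(cores: int, mem_gib: int,
              runs_per_day: int, hours_per_run: float) -> DailyUsage:
    # An ephemeral runner holds resources only while a test run is active.
    busy_hours = runs_per_day * hours_per_run
    return DailyUsage(cores * busy_hours, mem_gib * busy_hours)


def saving(baseline: float, actual: float) -> float:
    # Fraction of the baseline that is no longer reserved.
    return 1.0 - actual / baseline


if __name__ == "__main__":
    # Hypothetical numbers, used only to illustrate the calculation.
    vm = always_on(cores=16, mem_gib=64)
    runner = on_demand(cores=16, mem_gib=64, runs_per_day=12, hours_per_run=0.5)
    print(f"always-on: {vm.core_hours:.0f} core-hours/day")
    print(f"on-demand: {runner.core_hours:.0f} core-hours/day")
    print(f"saving:    {saving(vm.core_hours, runner.core_hours):.0%}")
```

The same function applies to memory by passing the `gib_hours` fields instead of `core_hours`. The saving depends entirely on how many hours per day the tests actually run.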

How we solved the problem

GitHub Actions Runner Controller

The GitHub Actions Runner Controller (ARC) is a great way to limit resource consumption when running continuous integration (CI) tests, because it runs them inside Kubernetes Pods. Depending on the workload, the number of runners and the resources they use can scale up or down. This flexible behavior helps to ensure efficient resource usage and to complete tests on time. Additionally, GitHub Actions can define a test matrix and create a job for each parameter combination.
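The matrix expansion mentioned above is a Cartesian product over the test axes. The following sketch shows the idea in plain Python; it is not how GitHub Actions implements it, and the axis names and values are hypothetical examples rather than our actual test matrix.

```python
from itertools import product

# Hypothetical axes; real values depend on what the project supports.
MATRIX = {
    "kubernetes": ["1.27", "1.28", "1.29"],
    "cni": ["calico", "cilium", "flannel"],
    "runtime": ["containerd"],
}


def expand(matrix: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return one job description per combination of axis values."""
    keys = list(matrix)
    return [dict(zip(keys, combo))
            for combo in product(*(matrix[k] for k in keys))]


def exclude(jobs: list[dict[str, str]],
            unwanted: list[dict[str, str]]) -> list[dict[str, str]]:
    """Drop every job that matches all key/value pairs of any rule."""
    def matches(job: dict[str, str], rule: dict[str, str]) -> bool:
        return all(job.get(k) == v for k, v in rule.items())

    return [j for j in jobs if not any(matches(j, r) for r in unwanted)]


if __name__ == "__main__":
    jobs = expand(MATRIX)
    # Skip one combination that is assumed, for the example, to be unsupported.
    jobs = exclude(jobs, [{"kubernetes": "1.27", "cni": "cilium"}])
    for job in jobs:
        print(job)
```

Each resulting dictionary corresponds to one CI job, and therefore to one set of clusters that has to be created and destroyed. This is why the number of axes has a direct impact on resource consumption.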

But how do we deploy our Kubernetes clusters? We explored two possible solutions: KinD and Cluster API.

KinD

Kubernetes in Docker (KinD) is a tool that allows you to deploy and run Kubernetes clusters inside Docker containers, using the containers as nodes. It was, of course, our first choice for quickly creating clusters for our CI jobs, but we soon hit a major blocker: KinD requires full-node permissions to bootstrap clusters, which limits multi-tenancy with other teams in the testing environment and introduces significant security issues for the underlying cluster. Second, even when we granted these permissions, we ran into issues we could not fully diagnose: our CI pods were randomly killed for being out of memory (OOM), although the physical nodes had plenty of free memory. These two reasons forced us to look for a different approach.

Cluster API

Cluster API is an open-source Kubernetes project that provides declarative APIs and tools to simplify the deployment, management, and operation of Kubernetes clusters. It is designed to be cloud-agnostic, allowing users to run clusters on different cloud providers. However, Cluster API alone is not enough; we also need a component that provides the resources required to create our clusters.

We identified this component in KubeVirt, an open-source project that enables users to run virtual machines on top of Kubernetes clusters and provides an API for managing virtual machines and storage. Hence, we can leverage Cluster API with KubeVirt to create, manage, and operate virtual machines on our bare-metal Kubernetes cluster. Cluster API defines the desired state of the virtual machines, and KubeVirt ensures that this state is maintained. As a result, we were able to operate the VMs to set up full Kubernetes clusters with kubeadm in a few seconds, ready to be used for our integration tests.
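The declarative model described above can be sketched as follows. This is a minimal illustration, not our actual pipeline code: the helper name, the cluster name, the namespace and the CIDR ranges are hypothetical, and the API versions shown are assumptions that should be checked against the CRDs installed in your management cluster. A complete cluster definition also needs the objects referenced here (the control plane and the infrastructure cluster) plus machine templates, which are omitted for brevity.

```python
import json


def cluster_manifest(name: str, namespace: str,
                     infra_kind: str = "KubevirtCluster",
                     infra_api: str = "infrastructure.cluster.x-k8s.io/v1alpha1") -> dict:
    """Build a Cluster API `Cluster` object that delegates infrastructure to a provider."""
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",  # assumed version
        "kind": "Cluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Hypothetical CIDRs; they must not overlap with the host cluster.
            "clusterNetwork": {
                "pods": {"cidrBlocks": ["10.243.0.0/16"]},
                "services": {"cidrBlocks": ["10.95.0.0/16"]},
            },
            # The control plane is bootstrapped with kubeadm.
            "controlPlaneRef": {
                "apiVersion": "controlplane.cluster.x-k8s.io/v1beta1",
                "kind": "KubeadmControlPlane",
                "name": f"{name}-control-plane",
            },
            # The infrastructure provider (KubeVirt here) supplies the VMs.
            "infrastructureRef": {
                "apiVersion": infra_api,
                "kind": infra_kind,
                "name": name,
            },
        },
    }


if __name__ == "__main__":
    # One short-lived cluster per CI job; "ci-1234" is a made-up job identifier.
    manifest = cluster_manifest("ci-1234", "liqo-ci")
    # kubectl accepts JSON as well as YAML, so this output can be piped to
    # `kubectl apply -f -` on the management cluster.
    print(json.dumps(manifest, indent=2))
```

Because the infrastructure is only a reference, the same `Cluster` object can target another provider by changing `infra_kind` and `infra_api`, while the rest of the definition stays the same.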

With this new environment, we have clusters made of real virtual machines that behave very similarly to the clusters you can find in any other cloud environment. More importantly, we had no more Docker-in-Docker-related issues!

Additionally, Cluster API provides a standard layer that improves the portability of the tests: simply by changing the infrastructure provider, they can run on different platforms, such as AWS, GCP, Azure, or bare metal.

Proposed solution using Cluster API-provided clusters with KubeVirt

Conclusion

In conclusion, building an integration testing pipeline with Cluster API provides a robust and cost-effective method for testing cloud-native projects.

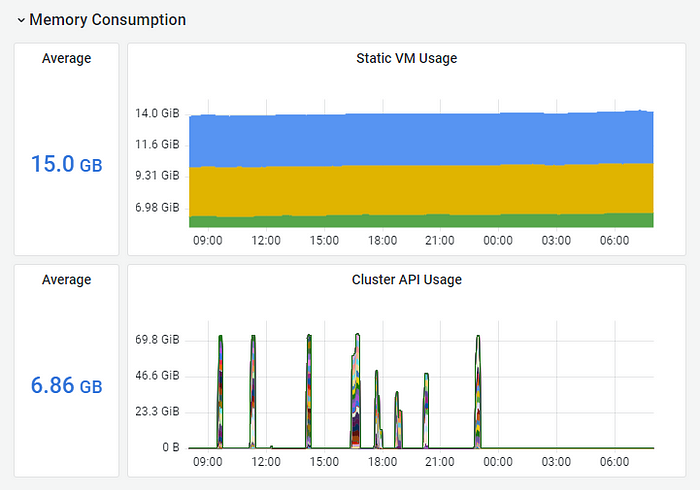

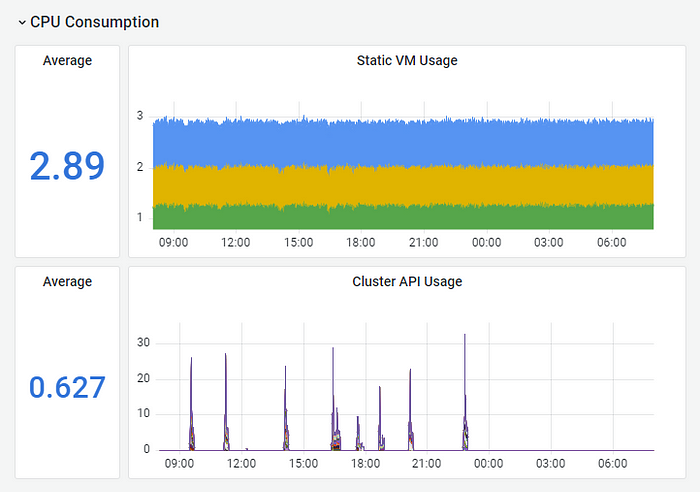

Our Grafana dashboard monitors how CPU and memory utilization has changed with this new testing environment.

Daily consumption of memory for three runners. Each one runs two Kubernetes clusters. (6 k8s clusters in total)

Daily consumption of CPU for three runners. Each one runs two Kubernetes clusters. (6 k8s clusters in total)

As expected, the graphs show that we consume resources only while performing tests; for the rest of the day, consumption is zero. On average, we reduced daily memory consumption by more than 50% and CPU consumption by more than 70%, even though we moved from two-node KinD clusters to three-node, full-fledged Kubernetes clusters.


To learn more about Kubernetes and the cloud native ecosystem, join us at KubeCon + CloudNativeCon Europe in Paris from March 19-22.