Author: Dan Finneran, Principal Community Advocate, Isovalent

Bio: Dan Finneran is a Principal Community Advocate at Isovalent. His journey to today has included bare metal, jails, zones, VMs and containers, and he is currently enjoying the fast-paced ride in the cloud native space. He also created and maintains a popular open-source load balancer for Kubernetes and contributes to upstream Kubernetes. He’s also been fortunate to present at events ranging from the British Computing Society and the HPE Technical Solutions Summit to DockerCon and KubeCon, amongst others.

The humble computer network is often overlooked and sometimes invisible to the eye (with WiFi), yet it is ubiquitous in our daily lives. As we stream video, access bank accounts and message friends and family, packets of information are switched and routed from various locations to where they are assembled into something that makes sense for the end user.

The maintainers of these networks have quietly racked and stacked large networking equipment, cabled it together end to end, and taken on the daily task of maintaining the configuration and health of these networks. The networking technology sector has been evolving continually, first growing to allow more devices to join these networks and then improving capacity to meet the demands of new users. Technologies have been added to create multiple guest networks within existing networks and to encrypt traffic between source and destination, amongst others, all to serve the growing needs of increasingly complex network topologies.

The network administrator not only has the daily task of keeping the lights on, but must also stay up to date with all of the requirements of their infrastructure whilst learning about new technologies that may be needed as business requirements and technologies evolve.

The growing requirements on network architectures

Originally, network architectures were simple and usually focussed on end-to-end connectivity, with a client typically wanting to retrieve data from a server. Over time, however, the demands placed on the underlying networks have grown, from an explosion in the number of tenants on networks to changes in application architectures. Some examples of these new network requirements are:

- Densely populated networks, requiring multi-tenancy technologies

- High performance compute (HPC) creating large demands for data / bandwidth

- High frequency trading, where latency is fundamental

However, the migration from physical servers and virtual machines to densely packed container environments (namely Kubernetes) has introduced an entirely new networking architecture.

It is the change towards containerised workloads that is causing a somewhat seismic shift, blurring the lines as to who is responsible for the networking infrastructure.

Bottlenecks appear

This shift has started to blur the lines of responsibility. Previously, if a developer or application owner wanted to deploy their workload or application, they would require input from the networking team. This often meant the networking team finding some available resource (such as an IP address) or even provisioning infrastructure such as a VLAN to host the application. Ultimately this was often found to be a bottleneck: the team would need to consult its IP address management (IPAM) software (often a spreadsheet) to find a free resource to allocate, and then the rest of the infrastructure chain might also need updating, from VLANs to routers to firewalls, all before the application could be accessed externally. This “firm grip” on the infrastructure is often cited as one of the main reasons we ended up with the term shadow IT, where people would simply go and buy infrastructure elsewhere instead of waiting for the internal process to complete.
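To make the manual lookup concrete, here is a minimal sketch of what "finding a free address" amounts to when the IPAM record is little more than a list. The subnet, the allocated addresses and the function name `next_free_address` are all hypothetical, chosen only for illustration.

```python
import ipaddress

def next_free_address(subnet, allocated):
    """Return the first usable host address in `subnet` that is not in `allocated`.

    Returns None when every host address is already taken.
    """
    network = ipaddress.ip_network(subnet)
    used = {ipaddress.ip_address(address) for address in allocated}
    # hosts() skips the network and broadcast addresses of an IPv4 subnet.
    for host in network.hosts():
        if host not in used:
            return str(host)
    return None

# Hypothetical "spreadsheet" contents: two addresses already handed out.
allocated_addresses = ["192.168.10.1", "192.168.10.2"]
free_address = next_free_address("192.168.10.0/29", allocated_addresses)
print(free_address)
```

The lookup itself is trivial; the bottleneck described above comes from the human process wrapped around it, plus the follow-on changes to VLANs, routers and firewalls.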

Kubernetes has entered the networking chat

The huge adoption of containerised workloads has drastically improved developer and application velocity, in large part by simplifying the deployment model for applications. The “build it, ship it, run it” approach is largely achieved by abstracting away the underlying infrastructure: as far as the application is concerned, it is the only thing running. To facilitate this, the network also had to change. To free developers from the concerns of physical and managed networks, the developers of Kubernetes simply created a “new” network. This network runs within the Kubernetes cluster and is shared between all the nodes that are part of the cluster. Suddenly developers are no longer beholden to someone assigning resources on the physical network; their applications can leverage an internal cluster network, enabling them to develop, test and scale with minimal friction.

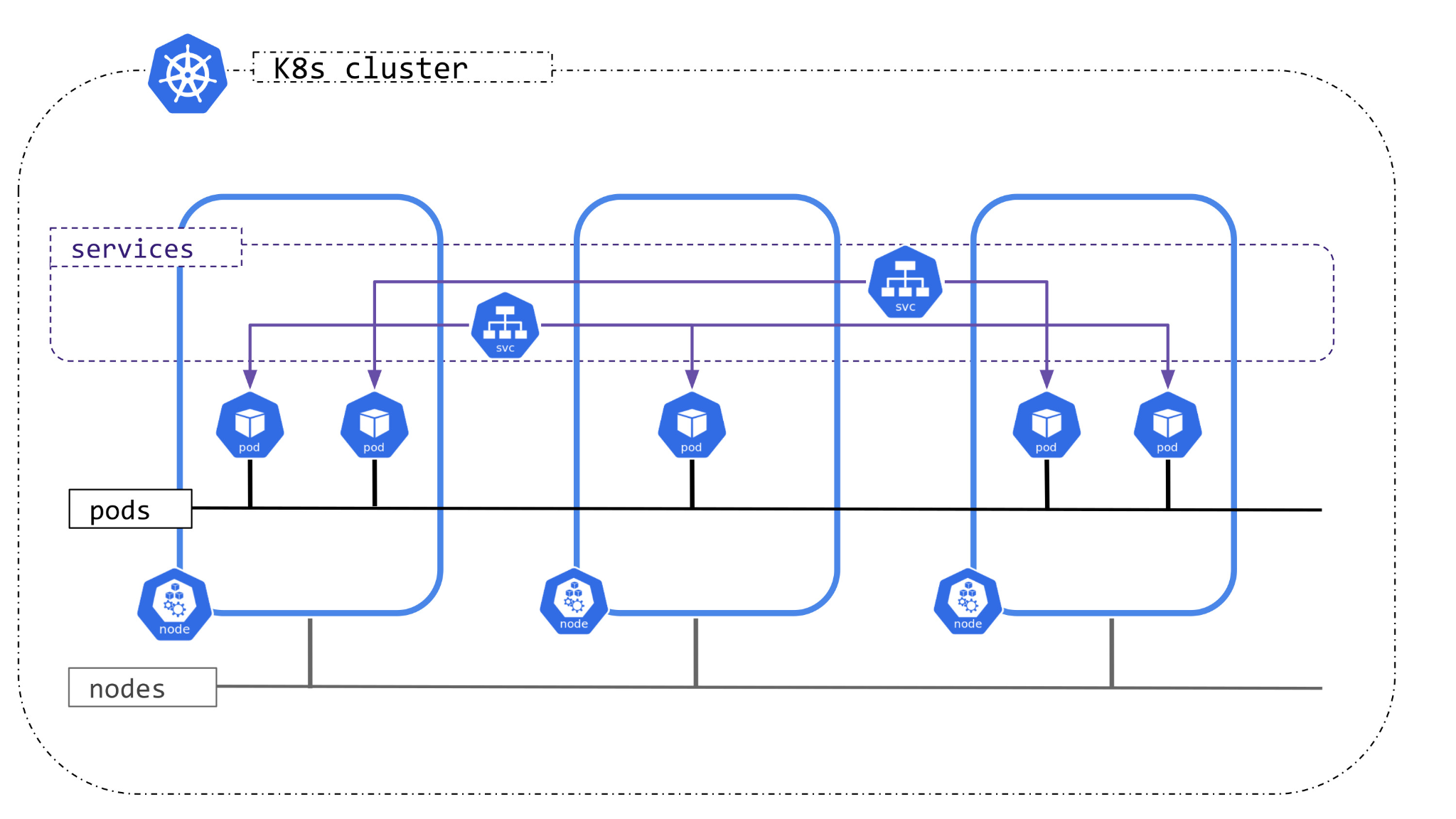

Within the cluster there are three networks that people need to concern themselves with:

- Pod network, the internal network that connects pods, and the applications running inside them, to one another

- Services network, the network that is used to expose a group of applications either to other applications or to the outside world

- Node network, the network that the cluster nodes are actually connected to (usually managed by the networking team)
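When troubleshooting, it often helps to know which of these three networks a given address belongs to. The following is a minimal sketch, assuming hypothetical CIDR ranges for each network; the ranges and the helper name `classify_address` are illustrative only, and real clusters will use whatever ranges they were configured with.

```python
import ipaddress

# Hypothetical CIDR ranges, chosen only for illustration; real clusters differ.
CLUSTER_NETWORKS = {
    "pod": ipaddress.ip_network("10.244.0.0/16"),
    "service": ipaddress.ip_network("10.96.0.0/12"),
    "node": ipaddress.ip_network("192.168.1.0/24"),
}

def classify_address(address):
    """Return the name of the cluster network containing `address`, or "external"."""
    ip = ipaddress.ip_address(address)
    for name, network in CLUSTER_NETWORKS.items():
        if ip in network:
            return name
    return "external"

for candidate in ["10.244.1.17", "10.96.0.10", "192.168.1.20", "8.8.8.8"]:
    print(candidate, "->", classify_address(candidate))
```

Only the node network in this sketch is one that a networking team would typically see on its own equipment; the pod and service ranges exist inside the cluster.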

The burning question that remains is how to expose applications on the internal pod network to the outside world. In cloud environments, this can be done simply by provisioning cloud infrastructure such as a load balancer that redirects traffic from a globally accessible external address to a service within the Kubernetes cluster. With physical or on-premises infrastructure, this can suddenly become a lot more nuanced, and is entirely dependent on the architecture and features available in the underlying network.
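In Kubernetes terms, that request for a load balancer is usually expressed as a Service of type `LoadBalancer`. The sketch below builds such a manifest as a plain Python dictionary and prints it as JSON. The service name, the `app` label and the port numbers are hypothetical, and the helper `load_balancer_service` is purely illustrative.

```python
import json

def load_balancer_service(name, app_label, port, target_port):
    """Build a Kubernetes Service manifest of type LoadBalancer as a dict."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # Asks the environment (cloud or on-premises) for an external address.
            "type": "LoadBalancer",
            # Traffic is forwarded to pods carrying this label (hypothetical label).
            "selector": {"app": app_label},
            "ports": [
                {"protocol": "TCP", "port": port, "targetPort": target_port},
            ],
        },
    }

manifest = load_balancer_service("web", "web", 80, 8080)
print(json.dumps(manifest, indent=2))
```

In a cloud environment the provider typically fulfils this request automatically. On-premises, something in the cluster or on the physical network has to assign and advertise the external address, which is where the nuance mentioned above comes in.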

What next for network operators?

As we’ve covered, networks are ever-evolving pieces of infrastructure that have to grow continually in speed and capacity to meet the demands placed upon them. However, with application owners and developers demanding to move faster, a new architecture of embedded networks within networks has started to become commonplace, bringing with it a range of new questions:

- Who architects the networks for cloud native applications?

- Who owns and troubleshoots these networks?

- What processes need to change in order to create connectivity from the underlying physical network to applications within these embedded networks?

In addition to all of this confusion about responsibility and ownership comes the new technical acumen required to comprehend what is happening within the cluster. Does the existing tooling work when troubleshooting networking problems, and if not, what tools are available?

Getting up to speed

With all of these challenges on the horizon, it may be difficult to determine which learning resources are available.

For those who prefer to be as close to the real thing as possible and maintain full control over the cluster, Kubernetes in Docker (KinD) makes for a fantastic local environment in which to learn and practise troubleshooting.

For an out-of-the-box experience designed to provide an end-to-end scenario with clear goals in mind, there are a number of lab environments that provide a fantastic learning experience. The Isovalent lab platform provides a huge library of scenarios and technologies that an end user can benefit from.

Finally, for those who feel that they have successfully taken the leap into cloud native networking, the upcoming Cilium Certified Associate exam will cover both Kubernetes networking and various Cilium-specific features.

###

To learn more about Kubernetes and the cloud native ecosystem, join us at KubeCon + CloudNativeCon Europe in Paris from March 19-22.