Guest: Jorge Palma (LinkedIn)

Bio: Jorge Palma is the Principal PM Lead for AKS (Azure Kubernetes Service), where he serves thousands of customers and mission-critical applications and helped lead the service to become the fastest-growing service in Azure’s history. Formerly, he was the Technical Lead for App Dev and DevOps with the Azure Global Black Belt team. He has architected and implemented solutions in Azure since 2012, in roles spanning Services, Tech Evangelism, and Tech Sales.

Introduction

In today’s world, sustainability is no longer an option, but a necessity. As we face the challenges and environmental impacts of climate change, it has become increasingly clear that we need to take action to reduce our impact on the planet. This, coupled with new policy and compliance requirements in many regions, has led to a growing interest in sustainability across industries and organizations.

For the software industry in particular, there is a growing impetus for sustainability in application development. As more and more applications are being developed and deployed, there is increasing awareness of the subsequent environmental impact of these applications and their underlying infrastructure.

Enter cloud-native development. Cloud-native development patterns, such as dynamic scaling, and cloud-native technologies, like Kubernetes, allow organizations to easily manage their workloads and resources. While these patterns and technologies have historically been used to manage costs and improve development velocity, they can also be used to make application development more sustainable.

We can also go one step further and specifically design cloud-native workloads that are not only built for infrastructure efficiency but are also carbon aware. In this post, we will look at how to implement carbon aware scaling to help reduce the emissions associated with Kubernetes workloads.

Green software concepts: carbon intensity and carbon awareness

First, let’s start with some level setting. The need for sustainable computing has led to the development of “Green Software” or software that is designed with sustainability in mind. Green software is designed to minimize the environmental impact of computing and reduce the consumption of resources. In the paragraphs below, we will be looking at specific green software concepts as described by the Green Software Foundation.

Carbon intensity: Carbon intensity is a measure of the amount of carbon dioxide (CO2) emitted for each unit of energy produced by a source of electricity. This maps directly to the concept of “clean” and “dirty” energy. For example, coal is considered a “dirty” source of energy because it produces more CO2 emissions per unit of energy, giving it a higher carbon intensity than other sources (such as wind and solar).

Carbon awareness: Carbon aware applications are applications that have been specifically designed to reduce the carbon emissions generated by their use. By considering the carbon intensity of the energy sources they use, they can adjust their energy consumption to be more efficient and reduce their emissions. For example, an application can be set up to use only renewable energy sources or use fewer resources when the carbon intensity is high. Carbon aware applications can also be used to shape demand for energy by scheduling tasks for times when the carbon intensity is lower.

Demand shaping is a pattern of carbon awareness. It is the process of adjusting the demand for computing resources based on the carbon intensity of the energy source used to power the computing environment.
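As a rough illustration of why carbon intensity matters for demand shaping, emissions from running a workload can be estimated as the energy it consumes multiplied by the carbon intensity of the electricity at that time. The sketch below is not taken from any Green Software Foundation tooling: the function name is hypothetical and the numbers are made up purely to show the arithmetic.

```python
def estimated_emissions_g(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Estimate emissions in grams of CO2-equivalent: energy used times carbon intensity."""
    return energy_kwh * intensity_g_per_kwh


# Hypothetical figures for illustration only, not measurements.
job_energy_kwh = 10.0
low_intensity = 100.0   # gCO2eq/kWh, e.g. an hour with plenty of wind and solar
high_intensity = 500.0  # gCO2eq/kWh, e.g. an hour dominated by fossil generation

print(estimated_emissions_g(job_energy_kwh, low_intensity))   # 1000.0
print(estimated_emissions_g(job_energy_kwh, high_intensity))  # 5000.0
```

Under these assumed numbers, the same job emits five times less when it runs in the low-intensity hour, which is the effect demand shaping tries to exploit.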

To learn more about sustainable software, tooling, ecosystems and more, check out the Green Software Foundation.

Making Kubernetes carbon aware using KEDA

So, we want to implement carbon aware scaling to help reduce the emissions associated with Kubernetes workloads. How do we do this?

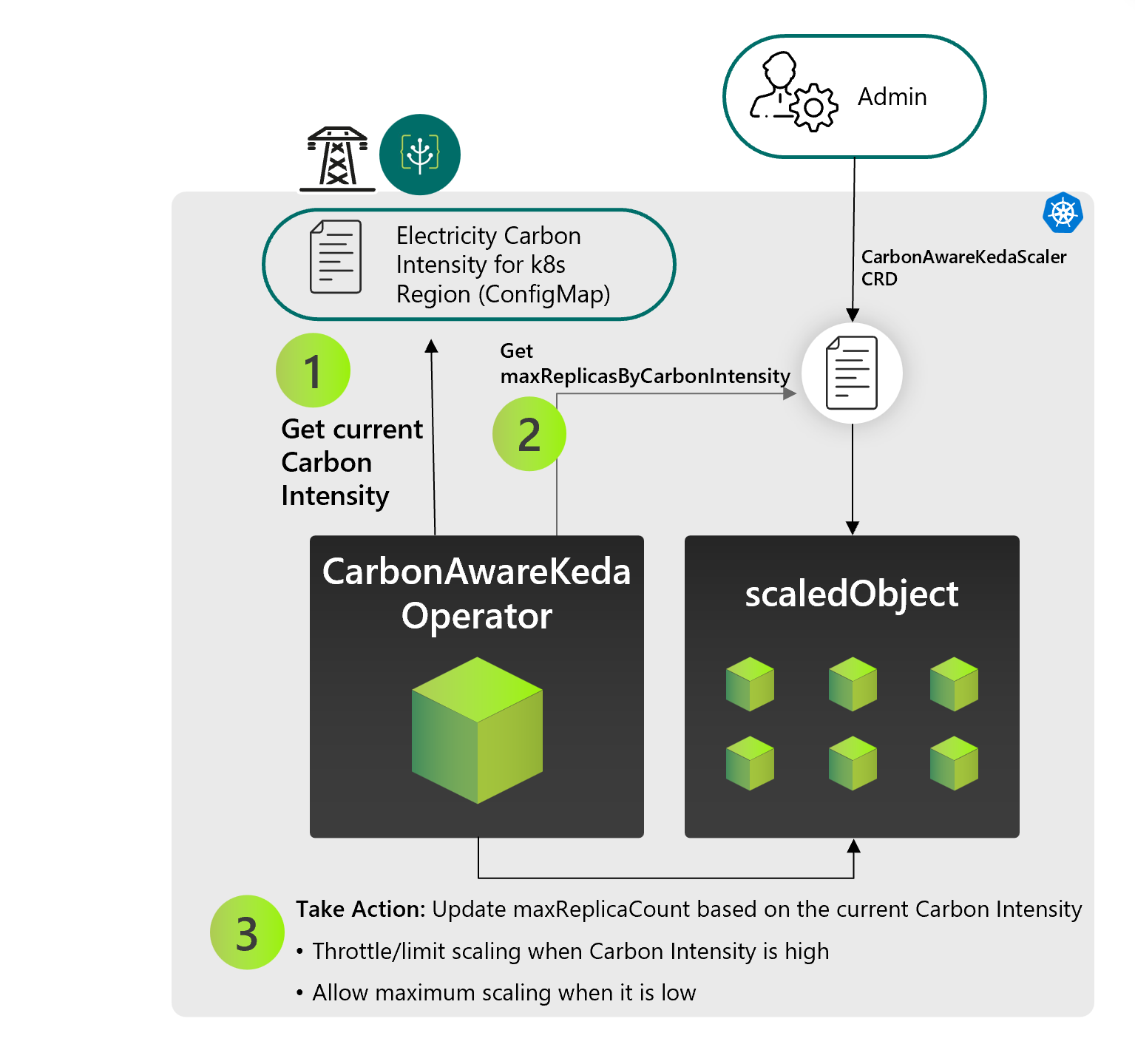

KEDA is a Kubernetes-based Event Driven Autoscaler that allows granular scaling of workloads in Kubernetes based on multiple defined parameters, using purpose-built scalers. To build a Kubernetes application with carbon aware scaling, we need to implement demand shaping that scales workloads based on the current carbon intensity of the location where the Kubernetes cluster is deployed. To achieve this using KEDA, you can set up the newly introduced KEDA carbon-aware scaler for your Kubernetes workloads and define your carbon intensity scaling thresholds.

Under the hood, this is implemented as a Kubernetes operator that reads the carbon intensity for the current hour from a configMap and then updates the maxReplicaCount of the KEDA scaledObject accordingly. This lets you reduce the energy your Kubernetes workloads use during high carbon intensity periods, which in turn reduces their carbon emissions.
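The core decision the operator makes can be sketched in a few lines. The following is a minimal illustration and not the operator's actual code: the `Threshold` type, the function names, the hour-keyed forecast mapping, and the fallback replica count are all assumptions made for this example. It looks up the forecast value for the current hour and maps it to a replica ceiling using user-defined thresholds.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Mapping, Sequence


@dataclass(frozen=True)
class Threshold:
    """Hypothetical rule: up to `max_intensity` gCO2eq/kWh, allow `max_replicas`."""
    max_intensity: float
    max_replicas: int


def intensity_for_hour(forecast: Mapping[int, float], now: datetime) -> float:
    """Return the forecast intensity for the UTC hour containing `now`.

    `forecast` is assumed to map an hour of the day (0-23, UTC) to an intensity.
    `now` should be timezone-aware.
    """
    return forecast[now.astimezone(timezone.utc).hour]


def max_replicas_for(intensity: float,
                     thresholds: Sequence[Threshold],
                     fallback_replicas: int) -> int:
    """Pick the replica ceiling of the first threshold the intensity fits under."""
    for rule in sorted(thresholds, key=lambda r: r.max_intensity):
        if intensity <= rule.max_intensity:
            return rule.max_replicas
    # Intensity is above every threshold: use the most restrictive ceiling.
    return fallback_replicas


# Made-up forecast and thresholds for illustration.
forecast = {hour: 100.0 for hour in range(24)}
forecast[14] = 450.0
thresholds = [Threshold(200.0, 100), Threshold(400.0, 60)]

now = datetime(2023, 1, 1, 14, 30, tzinfo=timezone.utc)
current = intensity_for_hour(forecast, now)
print(current, max_replicas_for(current, thresholds, fallback_replicas=10))  # 450.0 10
```

In a real cluster, the resulting value would be written to the scaled object's maxReplicaCount rather than printed. KEDA would continue to scale on its usual triggers, only with a lower ceiling during high-intensity hours.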

How to use it?

- Provide carbon intensity data in your Kubernetes cluster:

One way to do this is to use Carbon-intensity-data-exporter, a Kubernetes operator that pulls the latest daily forecast for the carbon intensity of the region where your Kubernetes cluster is deployed. It uses the Carbon Aware SDK from the Green Software Foundation and stores the data in a configMap, which is then consumed by the KEDA carbon aware operator.

Additionally, using the forecast data and caching it in the configMap reduces network traffic compared to using real-time data, which would have to be fetched several times a day. Later on, you can leverage other sources of data, such as data from a cloud provider, to enable KEDA to scale more efficiently and accurately.

- Make your KEDA scalers carbon aware:

Deploy the Carbon Aware KEDA Operator, which updates the ‘maxReplicaCount’ field of KEDA scaledObjects and scaledJobs based on the current carbon intensity.

Source: Green Software Foundation


Workload scenarios that can benefit from carbon aware scaling

Several types of workloads can benefit from carbon aware scaling, including data backups, data analytics processing, and regular development work in testing and staging environments. Workloads that are not time sensitive and do not need to run in real time are ideal, because they can be executed when carbon intensity is low. A good example is batch processing that is deliberately designed so that intensive work can be scheduled during low-carbon periods.

Going further with carbon aware computing on Kubernetes

In addition to the carbon aware KEDA operator, there are several other approaches that can help reduce emissions from Kubernetes workloads:

- Time shifting is the concept of planning workload execution during low carbon intensity periods.

- The use of cloud-native technologies, such as serverless patterns, can also help reduce emissions by allowing you to scale workloads on demand and reduce compute usage during times of lower demand.

- Kubernetes can also be configured to use more energy efficient hardware such as ARM processors, which can help reduce emissions.

- Organizations can also choose to deploy Kubernetes clusters in locations with lower carbon intensity levels – i.e., in regions powered by a greater proportion of renewable energy sources.
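Time shifting, the first item above, can be sketched as a search over an hourly forecast for the window with the lowest total carbon intensity. This is an illustrative sketch under stated assumptions: the function name is hypothetical, the forecast is assumed to be a list of hourly values starting at the current hour, and the numbers are invented.

```python
from typing import Sequence


def best_start_hour(forecast: Sequence[float], duration_hours: int) -> int:
    """Return the offset (in hours) of the lowest-total-intensity window.

    Uses a sliding window sum so each forecast value is visited once.
    Ties are resolved in favor of the earliest window.
    """
    if not 1 <= duration_hours <= len(forecast):
        raise ValueError("duration_hours must be between 1 and len(forecast)")

    window_sum = sum(forecast[:duration_hours])
    best_sum, best_start = window_sum, 0
    for start in range(1, len(forecast) - duration_hours + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        window_sum += forecast[start + duration_hours - 1] - forecast[start - 1]
        if window_sum < best_sum:
            best_sum, best_start = window_sum, start
    return best_start


# Made-up hourly forecast in gCO2eq/kWh, starting from the current hour.
hourly_forecast = [500.0, 400.0, 100.0, 120.0, 450.0, 480.0]
print(best_start_hour(hourly_forecast, duration_hours=2))  # 2
```

With these assumed values, a two-hour batch job would be deferred by two hours so that it runs during the two cleanest consecutive hours of the forecast.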

In summary, carbon aware scaling is a powerful tool that can help organizations reduce the emissions of their Kubernetes workloads. By combining the carbon aware KEDA operator with time shifting, serverless patterns, energy efficient hardware, and deployment in lower carbon intensity regions, organizations can significantly reduce emissions and do their part in combating climate change.