Author: Dave Cope, Chief Marketing Officer, Spectro Cloud

Bio: Dave brings with him executive experience from a number of businesses that span from early stage to Fortune 500 technology companies, such as Purfresh, BizGenics, Marimba, Illustra and IBM. Most recently, Dave was an executive at Cisco, where he held a variety of executive roles including sales and product management for Cisco’s emerging cloud offerings.

Right now here at Spectro Cloud we’re in the process of building our third annual State of Production Kubernetes report, based on a large-scale survey we conducted with Dimensional Research this summer.

As we head towards KubeCon in Chicago, the data makes for very interesting reading. It illuminates how far Kubernetes has come, and how much there still is to do, on several of the key issues facing our community.

Let’s take a tour of the headlines.

Kubernetes is still too complex for the enterprise

Our theme in last year’s report was complexity: how it’s growing and what effects it produces.

Well, a year later and Kubernetes complexity isn’t going away — it’s still stirring the emotions of IT pros across the land:

“Kubernetes is the most frustrating, painful, and beautiful thing I’ve worked with in my technology career.”

Kubernetes is hard enough when you’ve got one cluster to deal with. But the majority of our respondents have more than ten Kubernetes clusters in production, running in multiple clouds and other environments. They run multiple distributions and have many different software elements — monitoring, service mesh, security tools — deployed in their clusters.

Larger organizations and those with more clusters reported greater diversity in terms of how many different distributions and software integrations they use in their clusters. A third of large enterprises use six or more different distributions. 45% of large enterprises have 20 or more different software elements in their stacks.

And most of our respondents expected growth in all aspects of their Kubernetes infrastructures over the coming year, from the number of clusters to the number of applications.

This (growing) complexity has consequences.

More organizations this year than last said they faced issues caused by the complexity of their software stack — and those with more clusters were most likely to suffer issues.

When asked about their top challenges with Kubernetes, most said it’s the difficulty of putting in place enterprise guardrails, such as security.

“When we first started working with Kubernetes, we weren’t working with mission-critical services. As we evolve and move more business impacting applications to the cloud, we’re faced with different problems. We’re tackling new issues around meeting regulations and dealing with latency demands of high performance applications.”

- IT Operations, Decision Maker, Large Financial Company

Given all this complexity and growth, and the issues that result, it’s perhaps no surprise that respondents said they spend too much time on reactive tasks like troubleshooting and patching, and not enough time on innovation and strategy.

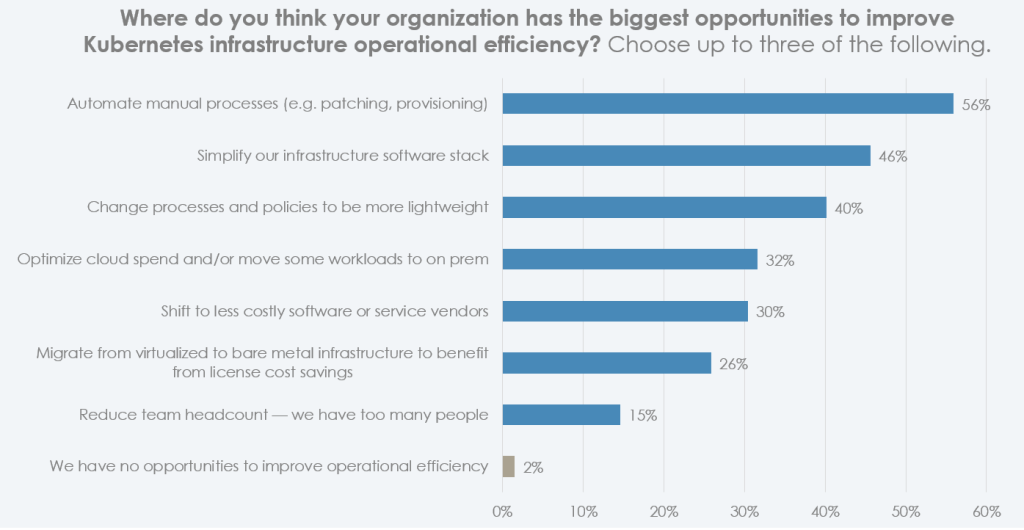

What are they going to do about this situation? For many, automation was the clear solution — after all, if you can provision or patch with a click instead of lots of manual CLI work, you free up precious time.

Complexity? It’s the devs that are suffering most

As we’ve explored in previous blogs, developers are often the ones most affected by the complexity of Kubernetes.

Our respondents agreed wholeheartedly (93%) that devs shouldn’t need to be Kubernetes experts; they should spend their time coding. No surprise there.

“There is complexity for developers when deploying to Kubernetes. They have to think about which parts of the application talk to each other. Then DevOps has to make sure the developers are isolating workloads so it doesn’t mess with anything else living in a cluster. Kubernetes enables us to do a ton and have this amazing flexible ecosystem, but it adds layers and layers that everybody needs to think about. And developers traditionally haven’t done much of that kind of thinking.”

- DevOps, Practitioner, Mid-size Logistics Company

But the reality? 30% said their dev teams are still spending too much time configuring Kubernetes instead of focusing on app development. 83% also noted that ops teams struggle to serve the unique needs of different dev teams for Kubernetes clusters. It’s the age-old tension, and one respondent expressed it well:

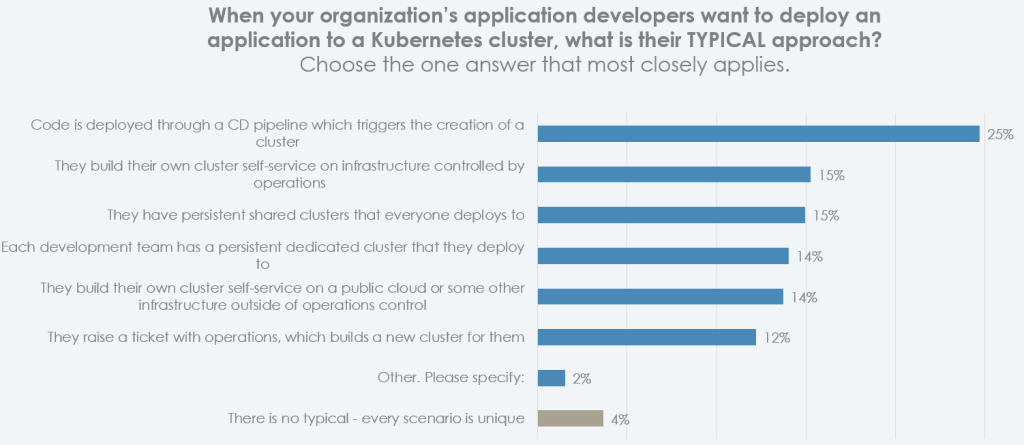

“There is a cycle of developers feeling they’re being slowed down because of a ticket or waiting for a cluster, so they try a self-serve approach. But then teams that are supposed to write code to add value to a company are doing operations tasks that they’re not the best suited for since they don’t have the expertise, and the pendulum swings back to operations to deliver clusters.”

- IT Operations, Decision Maker, Mid-size Retail Company

From this perspective, it makes sense that there’s no standard approach for developers to get access to the clusters they need. Every organization does it differently.

We’ve seen many new solutions emerge that promise to make building and deploying apps for Kubernetes simpler for developers. Indeed, three quarters of our respondents are investigating these solutions — although few are using them widely today, and 14% have abandoned the experiment. Perhaps more work is needed before these platforms are sufficiently mature to add the value that organizations expect from them.

What are we going to do with all these VMs?

Our research last year and this made it crystal clear: organizations that use Kubernetes in production have committed to the cloud native ecosystem for their applications going forward. But there are still millions of legacy VMs out there in the wild, and they’re not going away any time soon.

We asked respondents what their plan was for these VM-based applications.

“We’re pushing hard to containerize everything and I would call us container-first. But we have a huge existing pool of applications running in VMs, and we’re working through what to do with each of them. There are times we’ve recommended remaining on VMs for certain applications. Five years ago, I wouldn’t have gone that direction, but as crummy as it feels, sometimes it makes sense.”

- DevOps, Practitioner, Large Education Organization

So it’s clearly not a case of ditching VMs entirely and refactoring workloads into Kubernetes-native containers. Nor is it ideal to keep a second VM environment around. In fact, 86% say they would like to unify their containerized and VM workloads on a single infrastructure platform. 23% went as far as to say that “support for both VM and containerized workloads” is one of their top-three considerations when choosing a solution to manage Kubernetes infrastructure — although this is probably more of a wish than a concrete plan. Very few of those we interviewed were aware of solutions like KubeVirt, and those that had tried it were clear that it needs enhancement to be ready for enterprise use.

There’s a sense of urgency to this VM issue, with Broadcom’s acquisition of VMware getting approval, and reports of increasing prices for solutions like vSphere. 63% of our respondents said their costs for running virtualized infrastructure (e.g. annual VMware licenses) are “no longer sustainable”.

In fact, cost is proving a driver for some other emerging infrastructure changes. When asked about opportunities for improving efficiency, 32% said “moving some workloads back to on-prem” was in their top three tactics, and a further 26% said they were “investigating moving to bare metal to save on license costs”.

Perhaps all those news stories about moving back from the cloud are not just puff pieces.

Edge is ready for its tipping point

Last year we asked organizations if they were doing Kubernetes at the edge, and around a third of respondents said they were — albeit with a host of tough challenges to overcome along the way. This year, we dug a little deeper.

93% of Kubernetes adopters said they are considering edge computing initiatives. But only 7% are in full production today, with a total of 49% having some kind of active trial or deployment overall.

64% expect their use of edge to grow over the next 12 months, with a marked increase in ‘strong growth’ versus last year’s survey.

What’s driving this growth? In our interviews, many IT leaders raised the potential that AI workloads offer at the edge.

“We’re doing some initial work with AI and ML applications, and we are seeing real potential for doing those on edge devices. But it’s still early days.”

- IT Operations, Decision Maker, Large Financial Company

We asked last year, and again this year, what organizations thought was challenging about doing edge Kubernetes.

It seems some of the foundational technological issues have eased a little this year. It’s no longer so difficult to find the right edge hardware, for example. But the “we’re live, what now?” challenges have grown.

“When we started with our edge computing initiative, our issues were very much related to the basics of dealing with remote devices. Those were solved with a bit of time and experience, and we found a toolset that was right for our needs. Now we’re figuring out more of the issues of enterprise management and scaling.”

- IT Operations, Decision Maker, Mid-size Transportation Company

We saw a big jump in compliance and security as a challenge, cited by 62% of our respondents. Day 2 operations, the cost of field engineering visits, and the challenges of connectivity at the edge all saw big spikes too.

Stay tuned for the full story

We’ve just given you the briefest whistle-stop tour of a rich dataset, based on a robust survey of 333 Kubernetes professionals. For deeper analysis, excerpts from interviews and much more, stay tuned for the full report, launching at KubeCon. To make sure you don’t miss it, sign up here.

Join us at KubeCon + CloudNativeCon North America this November 6 – 9 in Chicago for more on Kubernetes and the cloud-native ecosystem.